Understanding GSC Data Sampling and the Long-Tail Impact

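Google Search Console (GSC) does not present a complete dataset in its standard web interface. To protect user privacy and conserve processing resources, GSC withholds queries issued by very few users (so-called “anonymized queries”) from its query tables and caps the number of rows the interface displays. The clicks from these omitted queries still count toward the report’s top-level totals, which is why the two figures rarely match.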

This filtering, often described as data sampling, distorts an SEO’s view of the long-tail search landscape and can obscure a large share of a domain’s total search performance, in some cases as much as 50%.

The Risks of Incomplete Analytics

Relying exclusively on the native GSC interface can lead to flawed decisions. For example, the top-level chart may report 10,000 total site clicks, while the clicks for all individually listed queries add up to only 6,000. Without visibility into the queries behind the remaining 4,000 clicks (40% of your traffic), you are optimizing on incomplete data.
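The gap in this example reduces to one ratio: hidden share = (total clicks - visible query clicks) / total clicks. The minimal Python sketch below computes it; the function name `hidden_share` is hypothetical and not part of GSC or any particular tool.

```python
def hidden_share(total_clicks: int, visible_clicks: int) -> float:
    """Return the fraction of clicks not attributed to any listed query.

    hidden_share is a hypothetical helper used only for illustration.
    """
    if total_clicks <= 0:
        raise ValueError("total_clicks must be positive")
    if not 0 <= visible_clicks <= total_clicks:
        raise ValueError("visible_clicks must be between 0 and total_clicks")
    return (total_clicks - visible_clicks) / total_clicks


# Figures from the example above: 10,000 chart clicks, 6,000 in the query table.
share = hidden_share(10_000, 6_000)
print(f"Hidden long-tail share: {share:.0%}")  # prints "Hidden long-tail share: 40%"
```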

Revealing the Hidden Long-Tail

The Sampling Impact tool is designed to quantify this data gap and provide strategies for working around standard UI limitations.

1. Visualizing the Data Gap

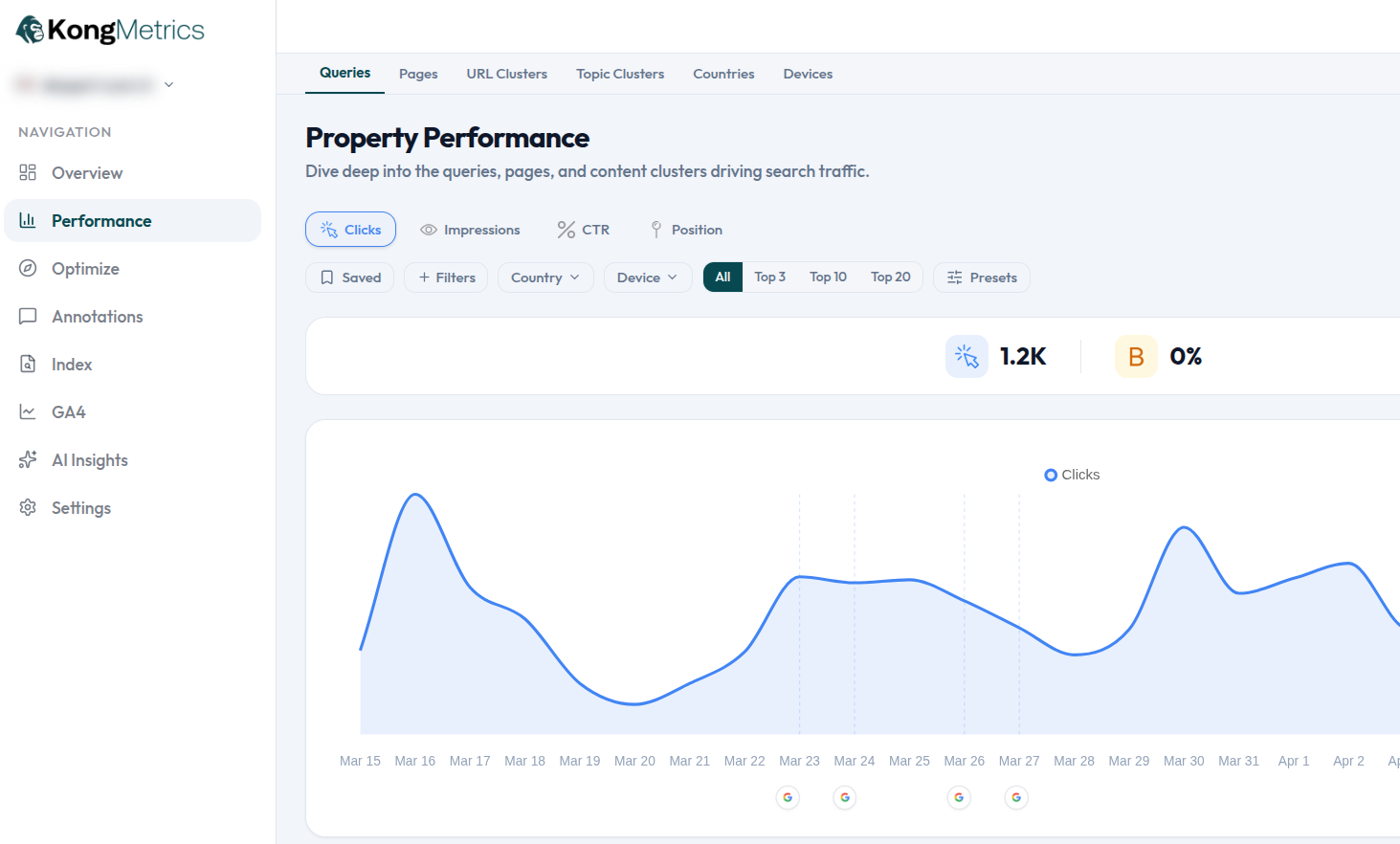

The platform contrasts your total traffic metrics with the sum of the visible, unsampled query data. This immediately quantifies the exact percentage of your traffic that resides in the hidden long tail.
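One way to reproduce this comparison yourself, assuming you already have rows from two Search Analytics API requests (one grouped by `date`, one grouped by `date` and `query`), is to total the query-level clicks per day and compare them with the daily totals. This is a sketch of the idea, not how the platform computes it; `gap_by_date` and the sample rows are hypothetical, and rows are assumed to follow the API's `keys`/`clicks` shape.

```python
from collections import defaultdict


def gap_by_date(date_rows, date_query_rows):
    """Compare daily totals with the sum of query-level clicks for each day.

    date_rows: rows from a request grouped by ["date"] (totals include anonymized traffic).
    date_query_rows: rows from a request grouped by ["date", "query"].
    Assumed row shape (hypothetical sample data below): {"keys": [...], "clicks": n}.
    """
    visible = defaultdict(float)
    for row in date_query_rows:
        date = row["keys"][0]  # "date" is the first requested dimension
        visible[date] += row["clicks"]

    report = {}
    for row in date_rows:
        date = row["keys"][0]
        total = row["clicks"]
        shown = visible.get(date, 0.0)
        hidden = max(total - shown, 0.0)  # never report a negative gap
        report[date] = {
            "total": total,
            "visible": shown,
            "hidden_share": hidden / total if total else 0.0,
        }
    return report


# Made-up illustrative rows, not real GSC data.
totals = [{"keys": ["2024-05-01"], "clicks": 120}, {"keys": ["2024-05-02"], "clicks": 80}]
queries = [
    {"keys": ["2024-05-01", "blue widgets"], "clicks": 70},
    {"keys": ["2024-05-01", "widget sizes"], "clicks": 20},
    {"keys": ["2024-05-02", "blue widgets"], "clicks": 40},
]
for date, r in sorted(gap_by_date(totals, queries).items()):
    print(date, f"{r['hidden_share']:.0%}")
# 2024-05-01 25%
# 2024-05-02 50%
```

Charting `hidden_share` per day gives a simple view of how the gap moves over time.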

2. Bypassing UI Limitations

By using the official GSC API and integrating with data warehousing solutions such as BigQuery, Kong Metrics extracts far more raw data than the standard web interface exposes (the interface tables stop at 1,000 rows, while the API returns up to 25,000 rows per request and supports paging), substantially reducing the impact of sampling.
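Because the API's `searchanalytics.query` method returns at most 25,000 rows per request, larger exports page through results with `startRow`. The sketch below assumes a caller-supplied `query_fn` that sends one request body and returns the parsed JSON response; `fetch_all_rows` and `query_fn` are hypothetical names, and this is not Kong Metrics' implementation.

```python
from typing import Callable, Dict, List

Row = Dict[str, object]
# Hypothetical callable: takes a Search Analytics request body, returns the JSON response.
QueryFn = Callable[[Dict[str, object]], Dict[str, object]]

MAX_ROWS_PER_REQUEST = 25_000  # per-request maximum for rowLimit


def fetch_all_rows(query_fn: QueryFn, start_date: str, end_date: str,
                   dimensions: List[str],
                   page_size: int = MAX_ROWS_PER_REQUEST) -> List[Row]:
    """Page through results with startRow until a page comes back short."""
    rows: List[Row] = []
    start_row = 0
    while True:
        body = {
            "startDate": start_date,
            "endDate": end_date,
            "dimensions": dimensions,
            "rowLimit": page_size,
            "startRow": start_row,
        }
        page = query_fn(body).get("rows", [])  # "rows" is absent when no data remains
        rows.extend(page)
        if len(page) < page_size:  # a short (or empty) page means we have everything
            return rows
        start_row += page_size
```

With the official Python client, `query_fn` could be `lambda body: service.searchanalytics().query(siteUrl=site_url, body=body).execute()`, where `service` is built with `googleapiclient.discovery.build("searchconsole", "v1", credentials=creds)`. Paging retrieves more of the listed rows, but anonymized queries remain omitted from query-level results.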

3. Long-Tail Query Discovery

With access to these much larger query datasets, SEO teams can uncover hyper-specific, low-volume long-tail queries that may nonetheless convert well. These are valuable search terms that competitors relying solely on standard GSC exports are unlikely to see or optimize for.
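A rough first pass at long-tail discovery is to filter query rows for specific (multi-word), low-impression queries that still earn clicks. The thresholds below are illustrative assumptions, `long_tail_candidates` is a hypothetical helper, and because GSC has no conversion data, judging which queries convert well would require joining these rows with your own analytics (not shown).

```python
from typing import Dict, List


def long_tail_candidates(rows: List[Dict], min_words: int = 4,
                         max_impressions: int = 50, min_clicks: int = 1) -> List[Dict]:
    """Pick specific, low-volume queries that still earn clicks.

    Rows are assumed to come from a request grouped by ["query"].
    Default thresholds are illustrative assumptions, not recommendations.
    """
    picks = [
        row for row in rows
        if len(row["keys"][0].split()) >= min_words
        and row["impressions"] <= max_impressions
        and row["clicks"] >= min_clicks
    ]
    # Highest click-through rate first, as a rough proxy for intent match.
    return sorted(picks, key=lambda r: r["clicks"] / max(r["impressions"], 1), reverse=True)


# Made-up illustrative rows, not real GSC data.
rows = [
    {"keys": ["blue widgets"], "clicks": 300, "impressions": 9000},
    {"keys": ["blue widget replacement hinge size 4"], "clicks": 3, "impressions": 12},
    {"keys": ["how to fix a squeaky blue widget"], "clicks": 2, "impressions": 40},
    {"keys": ["widget hinge for model x200 left"], "clicks": 0, "impressions": 5},
]
for row in long_tail_candidates(rows):
    print(row["keys"][0])
# blue widget replacement hinge size 4
# how to fix a squeaky blue widget
```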